Introduction

As an organization grows organically or inorganically, there will be an infusion of multiple technologies, a loss of governance and a drift away from the centralized model. In organizations where the BI environment is not robust, there will also be increased usage of MS Excel and MS Access to meet business needs such as cross-functional reporting and dashboards.

When the organization is small, one can cater to the business requirements by manually crunching numbers. But as it grows, factors like localization, compliance, and the availability and skill set of resources make this unmanageable and tedious. Similarly, as the usage of Excel spreadsheets grows, so does the complexity (macros, interlinked workbooks, etc.).

There are various models and frameworks for rationalization available in the industry today. Each model is designed to address a specific problem and offers an excellent short-term solution. Interestingly, all these industry models lack two important factors: a governance component, and pillars/enablers to sustain the effort and add value to the client.

Rationalization – The piecemeal problem:

When the environment slowly starts to become unmanageable, organizations look towards rationalization as a solution. Some of the factors that lead to the need for rationalization are:

- Compliance issues

- License fees consuming a large portion of the revenue

- Consolidation of operations is a bottleneck

- Data inconsistencies start to creep in

- Integration after a merger or acquisition

- Analysts spending more time validating the data rather than analyzing the information

Generally, organizations identify what needs rationalization based on what is impacting their revenue or productivity the most, e.g. tool license fees. Consultants are then called in to fix that particular problem and move on. There are two issues here:

- First, the consultant concentrates only on the problem at hand and does not validate the environment for the root cause to fix it permanently

- Second, the framework used by the consultant fixes the problem perfectly from a short-term perspective and does not guarantee a long-term solution

The Solution:

Rationalization models in the industry today need an update to include a governance component that helps assess, rectify and sustain the rationalization effort in the long run. In addition, enabling components need to be identified and included to add value to the overall exercise.

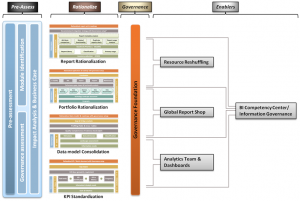

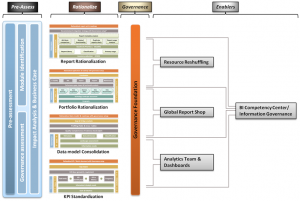

Figure 1

Figure 2

A typical rationalization model with a governance component and post-rationalization enablers would look as shown in Figure 2. This model is not comprehensive and shows only the most common rationalization scenarios.

Pre-Rationalization Assessment

A pre-assessment evaluates whether the requested rationalization is all that is required to fix the existing problems, or whether something more is needed for a permanent fix. Typically, a root cause analysis helps identify the actual reason behind the current scenario. Along with this, the existing governance maturity and value creation opportunities are identified to help enhance the user experience, adoption, sustainability and stability of the environment.

A simple Excel questionnaire should help initiate the pre-assessment phase (contact the author if you are interested in this questionnaire) without delving deep into the intricacies of the environment. Based on the assessment findings, the course of the rationalization exercise can be altered. For example, a formal Data Governance program could be kicked off in parallel.

Rationalize

This is the phase where the actual rationalization takes place using customized models and frameworks. Complete inventory gathering, metadata analysis, business discussions to understand the needs, etc. are performed. At this point, the client’s short-term need is met, but the sustainability of the environment is still questionable.

Governance Component

The depth of coverage for the governance component is to be mutually decided between the client and consultant based on environmental factors. Parameters like size of the IT team, existing processes and policies, maturity, etc. play a major role in deciding the depth required.

The governance component introduced within the rationalization framework would typically deep-dive into the system to understand the policies & standards, roles & responsibilities, etc. from various perspectives, in order to fix or find a work-around to the problem and ensure the scenario does not repeat itself.

Enablers

Post-rationalization enablers have no dependency on either the rationalization effort or the governance component. This component is kicked off after the rationalization phase, either as a separate project or as an extension to that phase. Although the enabling components are optional, they play a vital role in adding value to the client by sustaining the setup from a long-term and user adoption perspective. Where more than one enabler has been identified, it is sufficient to address the key enabler first.

For example, if the client requests report rationalization, there is a high probability that the data model was not flexible, so users were creating multiple reports, or that there was a training issue. This would have been identified during the pre-assessment phase and can be addressed in this phase by setting up something like a Global Report Shop. The governance component would have helped address part of this issue by ensuring policies are in place so that users do not create reports at will and are instead directed to the right team or process to meet their requirements.

Taking another example, if the client goes for a KPI and metrics standardization exercise, there is a good chance that data model changes are needed, dashboards have to be recreated and analytic reports have to be designed from scratch. This can be handled by setting up a core analytics team well in advance. If this is not identified and addressed as part of the KPI standardization project, users’ day-to-day activities will be hampered, resulting in poor adoption.

Benefits

The primary advantages of this rationalization model are:

- Maximum revenue realization in a short duration

- Helps sustain the quality of the environment

- Enhances user productivity and adoption

Conclusion

Rationalization must be seen from a broader, long-term perspective. Rationalization that does not address the governance component will not be strong, and rationalization without supporting pillars like the enablers mentioned will not serve the long-term purpose.

Posted: June 18th, 2011

Categories: Business Intelligence

Tags: consolidation, enablers, framework, governance, KPI, model, portfolio, rationalization, report, standardization


What is Hadoop!

Apache Hadoop is an open source Java framework for processing and querying vast amounts of data (multiple petabytes) on large clusters of commodity hardware. The original concept behind Hadoop comes from Google’s papers on MapReduce and the Google File System. Hadoop grew out of the Apache Nutch project, and much of its development has been driven by Yahoo!. Today Apache Hadoop has become an enterprise-ready cloud computing technology and is becoming the de facto industry framework for big data processing.

Yahoo! runs the world’s largest Hadoop clusters. They work with academic institutions and other large corporations on advanced cloud computing research. Yahoo engineers are the leading participants in the Hadoop community.

Why Hadoop?

A primary goal of Hadoop is to reduce the impact of a rack power outage or switch failure so that even if these events occur, the data is still readable. This reliability is achieved by replicating the data across multiple hosts, which removes the need for expensive RAID storage on hosts. In addition, replication and node failures are handled automatically.
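To make the replication idea concrete, here is a minimal sketch in Python. It is not HDFS's actual placement policy: the cluster layout, the host names and the `place_replicas` helper are all made up for illustration, and the placement is a simple round-robin over racks. It only shows why spreading copies over several racks keeps every block readable when one rack goes down.

```python
# Hypothetical cluster: rack name -> hosts. All names are invented for this sketch.
CLUSTER = {
    "rack1": ["r1-h1", "r1-h2"],
    "rack2": ["r2-h1", "r2-h2"],
    "rack3": ["r3-h1", "r3-h2"],
}


def place_replicas(block_id, replication=3):
    """Pick one host on each of `replication` distinct racks (simple round-robin)."""
    racks = sorted(CLUSTER)
    if replication > len(racks):
        raise ValueError("this sketch places at most one replica per rack")
    hosts = []
    for i in range(replication):
        rack_hosts = CLUSTER[racks[(block_id + i) % len(racks)]]
        hosts.append(rack_hosts[block_id % len(rack_hosts)])
    return hosts


def readable_after_rack_failure(placement, failed_rack):
    """True if every block still has at least one replica outside the failed rack."""
    failed = set(CLUSTER[failed_rack])
    return all(any(h not in failed for h in hosts) for hosts in placement.values())


# Eight blocks, three copies each: losing any single rack leaves all blocks readable.
placement = {block: place_replicas(block) for block in range(8)}
for rack in CLUSTER:
    print(rack, "down -> all blocks readable:", readable_after_rack_failure(placement, rack))
```

With a single copy per block (`replication=1`), the same check fails as soon as the rack holding that copy is lost, which is the situation replication is meant to avoid.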

Another thing unique to Big Data concerns where the processing happens. In traditional data warehouses, IO operations (bringing data to the server for processing) take a major chunk of the time. Here, the data locations are exposed and the processing is sent to the place where the data resides, which provides a very high aggregate bandwidth. Think of sending a 2MB JAR file to the place where the data resides versus bringing 2GB of data to the server for processing.

Hadoop support and tools are available from major enterprise players such as Amazon, IBM and others. Almost every big internet company, like Facebook, NY Times, Last.fm and Netflix, is using Hadoop to some extent.
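A quick back-of-the-envelope calculation shows the size of that difference. The 1 Gbit/s link speed below is an assumed figure, not something from a real cluster, and latency and protocol overhead are ignored.

```python
MB = 10**6
GB = 10**9


def transfer_seconds(size_bytes, link_bits_per_second):
    """Time to push size_bytes over a link, ignoring latency and protocol overhead."""
    return size_bytes * 8 / link_bits_per_second


LINK = 1e9  # assumed 1 Gbit/s network link

ship_code = transfer_seconds(2 * MB, LINK)  # send the 2MB JAR to the data
ship_data = transfer_seconds(2 * GB, LINK)  # bring 2GB of data to the server

print(f"ship code: {ship_code:.3f} s")
print(f"ship data: {ship_data:.1f} s")
print(f"ratio: {ship_data / ship_code:.0f}x")
```

Whatever the link speed, the ratio stays the same: moving the data costs about a thousand times more than moving the code.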

When and where can Hadoop be used?

Hadoop can be used when processing can easily be made parallel (for example, certain types of sort algorithms), when running batch jobs is acceptable, when access to lots of cheap hardware is easy, and when there are no real-time or user-facing requirements. Document analysis and indexing, web graphs and crawling are typical examples.

Applications that require a high degree of parallel, data-intensive distributed operation, such as very large production deployments (GRID) or the processing of large amounts of unstructured data, will also find Hadoop to be a good fit.

Having said this, one should also know where it should not be used. Below are a few points on the same:

- HDFS is not designed for low latency access to a huge number of small files

- Hadoop MapReduce is not designed for interactive applications

- HBase is not a relational database or a POSIX file system and does not have transactions or SQL support

- HDFS and HBase are not focused on security, encryption or multi-tenancy

- Hadoop is not a classical GRID solution

It is for these reasons that Big Data is said to be unable to replace traditional DW systems; it can only co-exist with them to enhance them or ease the bottlenecks they pose in today’s environment. A few places (industries) where Hadoop is already being used are below:

- Modeling true risk (Insurance & Healthcare)

- Fraud detection (Insurance, Banking & Financial Services)

- Customer churn analysis (Retail)

- Recommendation engine (e-commerce & retail)

- Image processing and analysis (criminal database – face detection/matching)

- Trade surveillance (Stock Exchange)

- Genome analysis (Protein folding)

- Check-ins by users (Foursquare, Gowalla, TripAdvisor, etc.)

- Sort large amounts of data

- Ad targeting (contextual ads)

- Point of sale analysis

- Network data analysis

- Search quality (Search engines)

- Internet archive processing

- Physics lab (e.g. Large Hadron Collider, Switzerland – generates 15PB of data per year)

How is Hadoop helping?

Hadoop implements a computational paradigm named Map/Reduce, where the application is divided into many small fragments of work, each of which may be executed or re-executed on any node in the cluster. In addition, it provides a distributed file system (HDFS) that stores data on the compute nodes, providing very high aggregate bandwidth across the cluster. Both Map/Reduce and the distributed file system are designed to handle node failures automatically as part of the framework.
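The map, group and reduce steps can be sketched in a few lines of plain Python. This runs in a single process and is not Hadoop's API; the function names are chosen for illustration. In a real cluster each `map_phase` call and each `reduce_phase` call could run on a different node.

```python
from collections import defaultdict


def map_phase(document):
    """Map: emit a (word, 1) pair for every word in one input fragment."""
    for word in document.lower().split():
        yield word, 1


def shuffle(pairs):
    """Group all emitted values by key, as the framework does between map and reduce."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Reduce: combine all values seen for one key."""
    return key, sum(values)


def word_count(documents):
    pairs = (pair for doc in documents for pair in map_phase(doc))
    return dict(reduce_phase(key, values) for key, values in shuffle(pairs).items())


counts = word_count(["big data big clusters", "data moves to code"])
print(counts)
```

Because each fragment is mapped independently and each key is reduced independently, a failed piece of work can simply be re-executed on another node.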

A few Hadoop buzzwords:

- Pig – High-level data-flow language and execution framework for parallel computation. It is a platform for analyzing large data sets. The structure of Pig programs is amenable to substantial parallelization, which enables them to handle very large data sets. It consists of a compiler that produces sequences of MapReduce programs

- ZooKeeper – High-performance coordination service for distributed applications. A centralized service for maintaining configuration information, naming, providing distributed synchronization, and providing group services. In HBase, its primary work is master election, locating the ROOT region and tracking region server membership

- Hive – Facilitates ad-hoc query analysis, data summarization and analysis of large datasets. Provides a simple query language called HiveQL which is based on SQL. Can be used by both SQL users and MapReduce experts.

- HBase – Database. HBase is an open-source, distributed, versioned, column-oriented store modelled after Google’s Bigtable (A Distributed Storage System for Structured Data). HBase provides Bigtable-like capabilities on top of Hadoop core. It is written in Java and focused more on scalability and robustness. HBase is recommended when you have records that are very sparse, and it is also great for versioned data. It is not recommended for storing large amounts of binary data. It uses the HDFS file system and locates data through its data model of row (string), column (string) and timestamp

- MapReduce – Programming model in which a map step filters and transforms the input into key/value pairs and a reduce step aggregates the values for each key. (More about this in a separate post)

- Cassandra – Cassandra was open sourced by Facebook in 2008. It is a highly scalable second-generation column-oriented distributed database. It brings together Dynamo’s fully distributed design and Bigtable’s Column Family-based data model.

- Oozie – Yahoo!’s workflow engine to manage and coordinate data processing jobs running on Hadoop, including HDFS, Pig and MapReduce. It is an extensible, scalable and data-aware service to orchestrate dependencies between jobs running on Hadoop

- Nutch – It is a crawler and search engine built on Lucene and Solr.

- Lucene – free text indexing and search engine

- Mahout – Apache Mahout is a scalable machine learning library that supports large data sets. It currently does: Collaborative Filtering, User & Item based recommendation, various types of clustering, frequent pattern mining, decision tree based classification, etc.

- Solr – High performance Enterprise search server

- Tika – Toolkit for detecting and extracting metadata and structured text content from various documents using existing parser libraries.

- Hypertable – Hypertable is an HBase alternative. Written in C++ and primarily focused on Performance. It is not designed to support transactional applications but is designed to power any high traffic website.
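The row, column and timestamp data model mentioned for HBase in the list above can be pictured with a small in-memory sketch. This is not the HBase client API; the `VersionedTable` class and its methods are hypothetical and only show how a sparse, versioned cell lookup behaves.

```python
class VersionedTable:
    """Toy sparse store: only cells that were written take up space."""

    def __init__(self):
        self._cells = {}  # (row, column) -> {timestamp: value}

    def put(self, row, column, value, timestamp):
        self._cells.setdefault((row, column), {})[timestamp] = value

    def get(self, row, column, timestamp=None):
        """Return the newest value, or the newest value at or before `timestamp`."""
        versions = self._cells.get((row, column))
        if not versions:
            return None
        candidates = [t for t in versions if timestamp is None or t <= timestamp]
        if not candidates:
            return None
        return versions[max(candidates)]


table = VersionedTable()
table.put("user1", "info:city", "Chennai", timestamp=1)
table.put("user1", "info:city", "Noida", timestamp=2)

print(table.get("user1", "info:city"))               # latest version
print(table.get("user1", "info:city", timestamp=1))  # value as of timestamp 1
print(table.get("user1", "info:age"))                # never written, so nothing is stored
```

Older versions stay available by timestamp, and a column that was never written for a row costs nothing, which is why this model suits sparse and versioned records.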

Difference between Pig and Hive:

Hive is supposed to be closer to a traditional RDBMS and will appeal more to a community comfortable with SQL. Hive is designed to store data in tables, with a managed schema. It will be possible to integrate Hive with existing BI tools like MicroStrategy once the required drivers (e.g. ODBC), which are under development, are in place.

On the other hand, Pig can be easier for someone who has no experience in SQL. Pig Latin is procedural, whereas SQL is declarative. A simple comparison:

In SQL:

    insert into ValuableClicksPerDMA
    select dma, count(*)
    from geoinfo join (
        select name, ipaddr
        from users join clicks on (users.name = clicks.user)
        where value > 0
    ) using ipaddr
    group by dma;

In Pig Latin:

    Users = load 'users' as (name, age, ipaddr);
    Clicks = load 'clicks' as (user, url, value);
    ValuableClicks = filter Clicks by value > 0;
    UserClicks = join Users by name, ValuableClicks by user;
    Geoinfo = load 'geoinfo' as (ipaddr, dma);
    UserGeo = join UserClicks by ipaddr, Geoinfo by ipaddr;
    ByDMA = group UserGeo by dma;
    ValuableClicksPerDMA = foreach ByDMA generate group, COUNT(UserGeo);
    store ValuableClicksPerDMA into 'ValuableClicksPerDMA';

Source: http://developer.yahoo.com/blogs/hadoop/posts/2010/01/comparing_pig_latin_and_sql_fo/
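For readers who know neither language, the same step-by-step data flow as the Pig Latin version can be mirrored in plain Python. The sample rows below are invented for illustration, and the two joins are simplified by assuming each user name and each IP address appears only once in its lookup table.

```python
from collections import Counter

# Made-up sample data with the same fields as in the comparison above.
users = [("alice", 30, "10.0.0.1"), ("bob", 25, "10.0.0.2")]               # name, age, ipaddr
clicks = [("alice", "/a", 1), ("alice", "/b", 0), ("bob", "/c", 2), ("bob", "/d", 3)]  # user, url, value
geoinfo = [("10.0.0.1", "DMA-1"), ("10.0.0.2", "DMA-2")]                   # ipaddr, dma

# filter Clicks by value > 0
valuable_clicks = [click for click in clicks if click[2] > 0]

# join Users by name, ValuableClicks by user (assumes unique user names)
ip_by_name = {name: ipaddr for name, _age, ipaddr in users}
user_clicks = [(user, ip_by_name[user]) for user, _url, _value in valuable_clicks
               if user in ip_by_name]

# join UserClicks by ipaddr, Geoinfo by ipaddr (assumes unique IP addresses)
dma_by_ip = dict(geoinfo)
user_geo = [(user, dma_by_ip[ipaddr]) for user, ipaddr in user_clicks
            if ipaddr in dma_by_ip]

# group UserGeo by dma, then count each group
valuable_clicks_per_dma = Counter(dma for _user, dma in user_geo)
print(dict(valuable_clicks_per_dma))
```

Each named intermediate result corresponds to one Pig Latin statement, which is what "procedural" means here: the order of the steps is spelled out rather than left to a query planner.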

Posted: May 2nd, 2011

Categories: Big Data

Tags: big data, big table, bigdata, bigtable, Cassandra, distributed file system, Hadoop, Hbase, HDFS, hive, hypertable, mahout, Map reduce, MapReduce, Nutch, Solr, tika, zookeeper


What is Big Data?

In non-technical language, as the name states, ‘Big data’ is the term used for voluminous data (structured, semi-structured and unstructured). Since many traditional tools are now capable of handling terabytes to petabytes of data, volume alone does not define it; the definition of Big Data also includes the ability to process this monster data.

Now with some jargon: ‘Big data’ systems are used to store and query huge amounts of data (in the order of petabytes and above) on large clusters of commodity hardware[1]. They do not need expensive storage devices like RAID or powerful systems like supercomputers. ‘Big data’ is horizontally scalable and fault tolerant with a high concurrency rate. It has a distributed database architecture[2] backbone to perform data-intensive distributed computing and can be set up either on a cluster of machines or on a single high-performance server.

Big Data is not exactly a disruptive force, as a few people claim, though it has the potential to change the way we see and work with the data warehouse. Big Data is here to complement the traditional data warehouse by helping organizations process enormous amounts of structured and unstructured data, in multiple formats and containing a wealth of information, in a short time compared to the traditional data warehouse.

Where is Big Data used or the most applicable?

- Tasks that require batch data processing that is not real-time/user-facing (e.g. document analysis and indexing, web graphs and crawling)

- Applications that require a high degree of parallel, data-intensive distributed computing

- Big data apps are often also very industry specific and used in very large production deployments (GRID), such as geological exploration in energy, genome research, medical research applications that predict disease, and prediction of terrorist threats

What can we do with Big Data?

If one has to categorize how various industries can leverage the Big Data concept, it would be as shown in the table below:

| Industry | Big Data Purpose |
| --- | --- |
| Life Science | Genome analysis; drug model development |
| Healthcare | Patient behaviour study to treat chronic diseases; adverse drug effect analysis |
| Retail | Contextual and targeted ad marketing; point of sale analysis; product recommendation engine (e.g. Amazon); customer churn analysis |
| Insurance | Risk modelling; location intelligence; catastrophe modelling and mapping services; claims fraud detection and incident tracking |
| Banking & Financial Services | Stock exchange: processing and surveillance of trade data; credit card fraud detection |
| Government/Others | Internet Archive (approx. 20TB per month); Large Hadron Collider, Switzerland (approx. 15PB per year); user check-ins (Foursquare, Gowalla, etc.) |

As you can see in the table, Big Data can help in a big way with fraud detection and prevention in the Financial Services sector, and with digital marketing optimization in sectors like Retail, Consumer Goods, Healthcare and Life Science. Big Data can also help organizations take strategic decisions by analyzing the vast wealth of information available inside social networks. After analysis, the data can be brought back into the DW and applied to production data for taking the necessary action.

For example, if an online retailer’s customer always buys designer wear, search indexes can be constantly revised in the recommendation engine. A Hadoop-based system can scrub web clicks and the most popular search indexes, while the traditional data warehouse will need several years of integrated historical data.

When is it used?

- To process large amounts of semi-structured data like analyzing log files

- When your processing can easily be made parallel, like sorting an entire country’s census data

- When running batch jobs is acceptable, for example website crawling by search engines

- When you have access to lots of cheap hardware

When not to use Big Data?

If you are talking about data that can fit into memory and be processed without too much trouble, then Big Data is not for you. For example, up to a few TBs of data can be processed using existing tools like MySQL and do not require a Big Data backend. If someone uses Big Data to process, say, a few GBs or TBs of data, it means they have money to burn and time to waste.

Concepts/Buzzwords for Big Data:

- Open source

- Fault tolerant systems

- Horizontally scalable

- Commodity hardware

- MapReduce Algorithm

- Multi Petabyte Datasets

- Open data format

- High throughput

- Move computation to data

- Column-oriented DBMS

- Massively Parallel Processing (MPP)

- Distributed File System

- Resource Description Framework (RDF)

- Data mining grids


[1] Commodity hardware is nothing but large numbers of already available computing components from various vendors put together in clusters for parallel computing. This helps achieve maximum computation power at low costs.

[2] Set of databases in a distributed system that can appear to applications as a single data source.

Never even dreamt that I would do this one day. Let me date things back a little to explain where and how it all started.

My friend Bhuvan did all the research, mentioned that there is a place called Umm-Al-Quwain where we can skydive, and wanted to know if I was interested. It was hardly a month before our term exams and I knew it was now or an opportunity lost. So I decided to go for it. On the day of the jump, it was a mix of nervousness and excitement. Bhuvan, Rachita and I reached the place at around 9am, signed a handful of papers, got introduced to our trainer and, after a small training session, were finally all set inside the plane. On reaching 11K feet, there was only one thing that crossed my mind: “I can’t believe I am doing this” 🙂

The moment I got off the plane and the AFF (Accelerated Free-fall) started, fear was not there in my head, as I knew I had a good 50 seconds at least before I could hit the ground, unlike in a bungee jump where you have 5 seconds. The tandem instructor did a couple of 360 degree turns and every time he did that, I could feel the pressure in my ears. I guess this is what people call experiencing G’s. Once you reach the no drop zone, the parachute gets deployed and it feels like someone pressed the pause button in a video. It takes a while to realize that you are still losing altitude, as the air that was gushing past you at close to 200 kmph is now somewhere around 60 kmph. The instructor asked me what it was like to come out of the free-fall. I told him it looked like everything had come to a sudden halt and was in slow motion now. He smiled and asked if I wanted to learn how to lose altitude fast in a parachute. I gave my obvious answer. Then he showed me the small grassland where we had to land. He said the wind direction plays a major role in landing and, as it was good, we would be able to drop altitude vertically for a few seconds and then start drifting towards the landing area. He then started to pull one side of the chute and we literally started to spiral down. We repeated that manoeuvre once by pulling the other side of the chute, and then I could see that we were really close to the grass strip.

So in total I had a 40 second AFF followed by a few minutes of gliding in the parachute. This is something that will definitely last a lifetime for me. Once you are on the ground, you’ll feel like you have conquered something. And then you will ask, “Is that all? Can I do it again?” 😀 In simple words, it is a perfect blend of excitement, achievement, sense of calmness and clarity.

As usual, I called home after all my adventure to say I just jumped off a plane 😉 Some things are to be kept as a surprise and this is one of them. I had to explain a couple of times before my dad actually understood what I was trying to tell him, as he definitely didn’t expect me to do this. I’m sure by now my parents know they have one crazy devil at home.


So what’s next? Solo sky-dive. I’m sure there is a long way to go. But someday, I will have the certificate to say I have conquered the blue skies. And ya, it does not end here. I need to scuba dive too. Give me some nice places to do these two, guys!!!

Posted: March 7th, 2011

Categories: Adventure zone

Tags: skydive, uae


19th November 2004, 9pm: It was the day before I had to leave for the US on my first onsite assignment. A hot day, but the night was very pleasant in Noida. I decided to treat my friends in the PG I was staying in and we went to Atta market. We saw this huge crane (120 ft high), lots of flood lights and people around. Got close to see what’s going on and guess what! A bungee jump. Not a lot of takers, so there was no queue at the ticket counter. I initially had no intention of doing it but this friend of mine (a sardar) was all excited and was like… JK let’s do it. It’s gonna be fun! You will not regret it. We stood there for about 10 min to see at least one person do it. Finally one brave soul did it and we decided to go for it too.

All geared up and with the elastic rope tied to my leg, I had to hop into a small compartment attached to the crane. At 120 ft, the night view of Atta market was simply amazing. The chill breeze flowing past me was a catalyst to the experience. The staff now asked me, “Do you want me to give you a push or are you going to jump yourself?” I told her I would jump myself. The reason was that I wanted to experience every single moment of it. A push might take it all away.

Trust me, this was the most difficult part. Hands stretched, legs tied and trying to tell your mind to push your body forward so your center of gravity can fall in front of you and you can make the jump is not easy at all. Every time I tried to push myself forward, my legs would not allow it and I just couldn’t do it. After about 15 seconds, I gave it all I could to push myself forward. The next few seconds were bliss. At this moment, there were two things running in my head. First, “WTF am I doing???” and second, “why am I not able to feel the elastic tied to my leg??!!” 🙂 After a few seconds, when you reach the point where the elastic cord pulls you up, that’s when the rope tightens around your leg and you get your “life” back. I literally mean this.

Those who have stage fright should try this. When you go on stage the next time, just think of this moment and fear will no longer be in your eyes. Called up home and told them what I did. Ya, it started off with my mom scolding me, “Is this the time to do such crazy things? You are traveling tomorrow and you are doing all this nonsense.” 🙂

Learning 1: You never know if opportunity will knock again. So when you have the chance, go for it.

Learning 2: If you want to do something crazy, do it first. Listen to no one but your instincts. Else it might be an opportunity lost.

Posted: March 6th, 2011

Categories: Adventure zone


Till 1999, my New Year celebration went like this.

- Invite friends home and have a party*

- Go to a relative’s place and have a party*

- Aimless drive around the city for a couple of hours… check out the lights, etc, etc and then have dinner at a good restaurant

- Sit at home and watch TV

*party = Dinner on the table.

Most of the time, it’s a “struggle” to stay awake till 12, unless there is some interesting program on TV. When the clock strikes 12, wish everyone and then in about 15min, crash. This 15min can extend to about 30min if we manage to get a call through to/from someone.

Year 2000, my first year in college; things didn’t change much as I was in Bangalore with my parents. Hostel life… first time out of the house… you miss home so it’s acceptable to head home and spend time watching TV doing nothing. The second and third years in college (2001 and 2002) were a little different. I don’t know if I can say it was good, as I ended up doing a return journey from Bangalore to Chennai by bus on New Year’s eve. On 1st Jan 2002 around 2:30am, I still remember seeing a shooting star while sitting in the half empty bus, comfortable as I had both the seats to myself. This is when I wished that I would spend every New Year in a new place outside home. Now let’s see how things progressed since then.

2003: Chennai

…with my roomie Shiva and his friends from Satyabama college. Pretty good for a start as we played some pool, had a beer and rode around Chennai on bikes. The adventure actually started around 12:20am when we returned to Besant Nagar (our home). We realized that the complete Besant Nagar beach area was blocked for security reasons and no one could go to the beach. Which meant we could not go to our own house, which was right next to the beach. 🙁

We tried every possible street to our area and nope… it was all blocked, barricaded and guarded. Then we had to stay at a friend’s place for the night and head back home the next morning around 6am. Quite a New Year… at least I was not at home. So the resolution was a success in the first year.

2004: Noida

Got placed in Noida after my ILP in TCS. First time for me in North India and with Hindi. As I reached Delhi just before Christmas, I was not put on any project. My friends and I were free to move around and explore the place. My Hindi knowledge was restricted to what I had heard from people and movies till then. But that did not hinder me much. Fun has no language barriers! A chilly New Year! No great fun, but we did have our time. Moreover, it was away from home as planned. Another successful year of keeping my 2002 New Year resolution.

2005: Wisconsin

Out of the country for the first time. Five guys left Waukesha county at about 7:30pm without dinner (hoping to get back by 2:30am and have dinner at home). Reached Milwaukee at around 9pm. One of my roomies, Rahul Bondre, had done the homework on what pubs were there and we decided to go with what he said was good. He had picked two places. We parked our car in a nice place and then started to walk. Trust me… walking at near sub-zero temperature in windy weather is not good, and only God knows what homework he did, as we ended up walking from one place to the other and back for almost an hour. (Details of the back and forth between pubs are out of scope for this post.) Then we reached a point where we decided to get in somewhere. Drove to another place close by and at around 11:45pm we were at the start of a street. Each shop in that street was nothing but a bar or a pub. The first one was called “Corner Pub”. We decided to start here, then go wherever we wanted and eventually meet up at a particular point at 6am. 🙂

And yes… we all ended up at the other end of the street at 6:30am. Things don’t end here. On reaching home we found that we hardly had anything to eat. Empty fridge and cupboards. Typical bachelors’ house… so no complaints. Now this was definitely one memorable New Year, and another successful year of upholding the tradition (2002 resolution).

2006: Mumbai

For some knowledge transition work I had to go to the TCS SEPZ office. Nice place… pretty close to IIT Powai and Hiranandani. I was there at Hiranandani with a friend of mine who was travelling to the US the next day. Crowd was damn good. Just that moving around was a little difficult, but I managed to get hold of a nice vantage point to see and enjoy the crowd. One happening and lively place. Mumbai Rocks! 😀

2007: Dehradun

This was another memorable trip. Stayed at Shrish’s sister’s place in Dehradun, visited Mussoorie, Dhanaulti and Auli, and was back in Dehradun for New Year’s eve at a nice hotel. You can read about this entire trip in one of my travelogues – The Great Auli Experience. This completes five successful years of holding on to my New Year resolution.

2008: Singapore

My MBA days… No words to explain. Term 2 for IT was heaven as we had finished most of the courses in Term 1 itself. Me and my friends had a ball of a time in Sentosa Island. It was a foam party! and that too by the beach side. Fireworks at 12 and beer flowing all over. Need I explain more???

2009: Chennai

Looks like my tradition of being in a new place just broke. But nothing to worry. This was a little different though I was not in a new place. We went to GRT Grand, then while heading back home (The Tree Story*) and then party at my house with Glen 😉 made this year Rock for me.

*The Tree Story is out of scope for this post.

2010: New York

A project at the last minute and that too in NY… just couldn’t get any better. Managed to get hold of my batch mate in NY. Though we were in office till well past 7pm, we managed to make it to Times Square on time. We could have gone to a hundred other places in NY, but we wanted to know what the big deal about this ball drop was. Trust me… it’s the worst experience. Those buffoons could have at least kept some display or music to entertain the people who are standing like idiots to watch a ball drop.

As luck would have it, we had two women to give us company. One was a cute nurse from CA and the other was from NY but had never witnessed the ball drop live, so she had also come to find out what the big deal was. Incidentally, she ran in the NY midnight marathon the year before. If it was not for these two, we would have actually given up and got out of Times Sq. much before 12. Then I caught up with my cousins who were at 55th and 8th ave. One awesome place. Thanks to Ashvini for picking the place “Barcelona Bar”. They sell some 100+ varieties of shots. 😀

After spending time with them, my friend Uphar and I got back to Jersey city, and continued the fun at my place.

2011: Bandipur, Karnataka

I was afraid I might end up at home and break the New Year code. But I got lucky as my friend Shrish was ready to check out some nice place for New Year’s eve. So I decided to create a theme for this New Year – Jungle Theme. Shrish, Apoorva (his wife), Aditya (his kid) and myself drove from Bangalore to Bandipur in his car. My car had just completed a Hampi trip and was not in a condition for this trip. NH13 highway sucked big time and took all the juice out of my poor lil car.

We had booked two log huts in a place called Vana vihar just before the start of the jungle. This was on the 30th December. The next day, we hit Gopalaswamy Betta, then Wayanad, where we did a jungle safari, followed by a self-guided night safari in Mudumalai. We then got back to Bandipur where a campfire was waiting for us. I should say, a campfire after a very very long time and away from the city buzz was definitely a good way to celebrate new year. Will post a separate blog on this trip later. Keep a watch on my Travelogue section.

2012: Edison, New Jersey

One of the best New Year’s! Spent at home with everyone around… Playing & chatting till midnight with some wine 😉 Can’t ask for more.

2013: Ooty

During college days while visiting Ooty, we (Shrish and myself) used to long for two things and this trip made it happen! One, we wanted to ride bikes and two, we wanted to come with a girl (wife now). We drove to Ooty and then rented bikes. Shrish was staying in a hotel about 20 minutes from our place as he had booked it long back. We got an excellent place to stay (Berry Hills resort) close to the Tea factory. As it was newly opened, everything was good! Except for the approach road. New Year party in Nahar hotel. Managed to check out Sinclair’s too on the way.

Oh btw, how can I forget to mention what happened at Doddabetta peak! My wife tells me only mad people have ice cream when it is cold, and when I start having one, she gets tempted and has a taste. After that you know what happened! She fell in love with ice creams in cold weather too 🙂

2014: Bangalore (Home)!

Guess the power of shooting stars lasts for a little over a decade only…. My 2002 New Year resolution ends today!

Posted: February 3rd, 2011

Categories: Random Thoughts

Tags: decade, new year, resolution


Focus on the concept. Not the name

Let me start with BICC and BI CoE. From what I have read till now in articles and books like “Business Intelligence for Dummies”, there is hardly any difference between the two. However, given a choice, the authors prefer CoE over Competency Center. The reason quoted in “Business Intelligence for Dummies” by Swain Scheps is that the word “competence” has the connotation of bare minimum proficiency, giving the feeling of being damned by faint praise. Even though a BICC will go well beyond mere proficiency, the name sounds merely average.

Based on what I have seen till now, I would say there is more than a fine line of difference between BICC and BI CoE. In my view, a BI CoE can be considered a subset of a BICC. One can have a number of CoEs, each concentrating on a particular area such as data, tools & technology, process, etc., while the BICC houses all these BI CoEs under one single umbrella and manages them.

To bring in more clarity, each of these CoEs will manage the resources and define standards, processes and best practices within its own scope. Any framework, approach or methodology for monitoring the overall progress of these CoEs, including shared assets, processes and technology, will be owned by the BICC.

Now to create some controversy/initiate discussion here, let’s bring in data governance (DG). Organizations implement either BICC or DG, but not both. On a closer look, you will see that the organizations that claim to implement DG and not BICC have, over a period, actually expanded into what would otherwise have been done as part of a BICC initiative. As shown in the picture below, they started off with “Data” governance as the primary goal (represented as point 1). Once the base was set, they realized that changes to other areas like infrastructure, tools, etc. were needed and started to enter that space and control them (represented as point 2).

As you can see, point 3 is represented in dotted lines because it is not mandatory for the DG team to set up separate CoEs in this model. It’s a single, strong, core team (dedicated in some cases) that could have gone ahead and performed the job of various CoEs during the setup without actually setting up the CoEs. In other cases, they would have set up small teams, such as one for technology as a whole, to quicken the process of implementation.

So to summarize, for me it’s something like this: BICC > BI CoE > Data Governance.

I am open to all kinds of feedback as I know I can be wrong. So please share your views on this topic.

Reference:

“Business Intelligence for Dummies” By Swain Scheps

http://www.executionmih.com/business-intelligence/competency-centre-competencies.php

Posted: January 17th, 2011

Categories: Business Intelligence

Tags: bicc, bicoe, dg


The 3-Level approach

The 3-level approach (Figure 1) works well when the organization is serious, has the required funding and resources to execute, and has a mature Enterprise Information Management (EIM) setup.

In organizations where the IT team is pressured to show tangible output for every penny invested, a 4-level approach as shown in Figure 2 can be adopted. The question, “Is it possible to divide this further into multiple levels?” might arise. The rule of thumb is that the more levels there are, the smaller the tangible benefit the business can see at each stage. So a faster turn-around with visible progress should be the mantra for a successful implementation.

The four-level data governance implementation has been considered for this article as that’s more common in the industry today.

What do we need for Level-2?

To set up a ‘very basic’ DG practice leveraging the existing setup, a database with a few tables, some scripts to act as triggers and an intranet portal should suffice. Note that this is just a starting point and hence the focus would be on data quality and not data governance to begin with. As funds flow in, one can move from data quality monitoring to data quality control and from there to data governance.

Going online

The organization’s existing intranet portal would be leveraged, and the objective now is to take processes online one by one, starting with the change management process. As part of Level-1 activities, the DG team would have already defined the process for change management and this would be used now. Steps to convert this process into something that can be monitored are as follows:

a. Capture the key fields to be used in the form. For example, ticket ID, priority of the change request, source & target systems affected, change description, etc.

b. Design the form front end with the fields identified and link it to a database (MS Access, Oracle, MS SQL) at the back end. Mature organizations can leverage their SOA setup.

c. Define the process flow. For example, who raises the request, what happens next, who approves it, exception handling, etc. This would again come from the detail captured in the previous phase.

d. Now bring in the governance component to ensure that no change gets moved to production without going through this process.

The more detail captured in step a, the tighter the governance in step d. For example, if there is a field to capture the results of QA, it automatically implies that a change moving to production has to pass through QA (Dev – QA – Production), assuming the process has been defined to require QA compliance before a change request is approved. Step c, which defines the process flow, can be accomplished using database triggers and/or procedures. If the organization already has a rule engine in place, it can be leveraged here.
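To make the trigger idea a little more concrete, here is a minimal sketch using SQLite through Python's built-in `sqlite3` module. The table name, column names, status values and trigger name are all my own assumptions for illustration; a real setup would use whatever database and fields the organization already has.

```python
import sqlite3

# Hypothetical schema: the columns follow the example form fields
# (ticket ID, priority, source and target systems, description, QA result).
SCHEMA = """
CREATE TABLE change_request (
    ticket_id     TEXT PRIMARY KEY,
    priority      TEXT NOT NULL,
    source_system TEXT NOT NULL,
    target_system TEXT NOT NULL,
    description   TEXT NOT NULL,
    qa_result     TEXT,                      -- NULL until QA reports back
    approved_by   TEXT,                      -- NULL until someone approves
    status        TEXT NOT NULL DEFAULT 'DEV'
);

-- Governance rule: a request may only reach PRODUCTION after QA has
-- passed and an approver is recorded.
CREATE TRIGGER enforce_qa_before_production
BEFORE UPDATE OF status ON change_request
FOR EACH ROW
WHEN NEW.status = 'PRODUCTION'
     AND (COALESCE(NEW.qa_result, '') <> 'PASS' OR NEW.approved_by IS NULL)
BEGIN
    SELECT RAISE(ABORT, 'change request has not cleared QA and approval');
END;
"""


def open_db():
    """Create an in-memory database in autocommit mode with the schema loaded."""
    conn = sqlite3.connect(":memory:", isolation_level=None)
    conn.executescript(SCHEMA)
    return conn


def raise_request(conn, ticket_id, priority, source, target, description):
    """Record a new change request; it starts in the DEV status."""
    conn.execute(
        "INSERT INTO change_request "
        "(ticket_id, priority, source_system, target_system, description) "
        "VALUES (?, ?, ?, ?, ?)",
        (ticket_id, priority, source, target, description),
    )


def promote(conn, ticket_id):
    """Try to move a request to production; return False if the trigger blocks it."""
    try:
        conn.execute(
            "UPDATE change_request SET status = 'PRODUCTION' WHERE ticket_id = ?",
            (ticket_id,),
        )
        return True
    except sqlite3.DatabaseError:
        return False
```

Calling `promote` on a freshly raised request returns `False`; once `qa_result` is set to `'PASS'` and `approved_by` is filled in, the same call goes through. Note that this sketch only guards updates, so a request inserted directly with a production status would slip past it unless a matching insert trigger is added.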

As an enhancement, this setup can be improved by adding small bits like SLA tracking, report generation capability, etc. Report generation can help governance in a major way by letting the DG team track various critical parameters like open requests, SLA breaches, requests moved to production through the exception process, etc. This in turn will help the DG team fine-tune processes and improve their maturity.

The next process to take online could be, say, BI Tools & Technology control. The steps shown in figure 3 are applicable here also. The difference would be in steps c and d, where the process is defined and governed.

Some of the other processes that can be brought under governance control without much cost and effort are:
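As a rough illustration of the kind of report meant here, the sketch below counts open requests, SLA breaches and requests closed through the exception process. The `ChangeRequest` fields and the five-day SLA are assumptions made up for this example, not values from any real process.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class ChangeRequest:
    """Hypothetical record of one change request."""
    ticket_id: str
    raised_at: datetime
    closed_at: Optional[datetime] = None   # None while the request is open
    via_exception: bool = False            # True if it bypassed the normal flow


def governance_report(requests: List[ChangeRequest],
                      now: datetime,
                      sla: timedelta = timedelta(days=5)) -> dict:
    """Summarize the parameters a DG team might want to track."""
    open_requests = [r for r in requests if r.closed_at is None]
    # Closed requests are measured to their closing time, open ones up to 'now'.
    breaches = [r for r in requests
                if ((r.closed_at or now) - r.raised_at) > sla]
    exceptions = [r for r in requests
                  if r.closed_at is not None and r.via_exception]
    return {
        "open": len(open_requests),
        "sla_breaches": len(breaches),
        "exceptions": len(exceptions),
    }
```

The same numbers could just as well come from a few SQL queries against the change request table; the point is only that these counts fall out of the fields captured in the form, with no extra tooling.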

- Database related activities (E.g. New DB creation, index creation)

- Data quality dashboard (E.g. rejected records list pulled from ETL, data certification status)

- Data security (E.g. user access to systems)

- Training tracker

In addition to this, various database triggers can be set to increase response levels. The primary advantage of getting to this stage is that the users would now be conversant with various processes in the organization and adapted to them. Some amount of governance would also have been established to move to the next level.

What next?

The organization can now consider moving to the next level by creating self-healing mechanisms wherever applicable. More advanced tools such as Kalido can be considered if required to improve the DG maturity level.

I’m really bored today hence posting this blog without much thought. Will definitely refine it later. Please do feed me with your comments to improve on this topic.

Posted: December 23rd, 2010

Categories: Business Intelligence

Tags: data governance, dg


Abstract

Giving data governance recommendations is easy, but implementing them is a different ball game altogether. Organizations do realize the importance and value of data governance initiatives, but when it comes to funding and prioritization, such initiatives always take a lower priority. Over time, poor governance becomes the root cause of a handful of organizational issues, and it is then that organizations start to prioritize DG and bring in experts to set things right.

“Are these experts providing what the organization needs?”

“Is the problem of data governance solved when the expert leaves?”

“If not, what is it that is lurking and needs to be addressed?”

This article tries to throw light on the expectations of these organizations, what they get from the experts and how much of the recommendation can be converted or is converted to reality.

The Genesis

Problems related to data governance (DG) usually begin in an organization when investments in technology infrastructure and human resources are considered as assets but not the data itself. Businesses must understand that the backbone of their organization is ‘data’ and their competitiveness is determined by how data is managed internally across functions.

The problem of DG becomes critical as an organization grows in the absence of any formal oversight (i.e., a governance committee). Silos of technology seep in and lead to disruption of the enterprise architecture, followed by processes. In due course, data gets processed by the consumers of data. The impact of this is huge as users can now manipulate and publish data. The ripple effect of this can be felt on processes like change management that will now happen locally at users’ machines rather than going through a formally governed process.

The ‘In-house’ DG Team

The DG team is either dormant or virtual in most cases and often exists at either Level-1 (lowest) or Level-2 in terms of maturity (on any model). The primary reason for this lack of maturity is the team’s unfamiliarity with DG processes beyond establishing naming standards, documentation of processes, identification of roles & responsibilities and a few other basic steps.

Even if the team is capable of setting up a DG practice, the business teams are not flexible enough to empower it sufficiently to establish a successful governance practice. In some cases, people identified to participate in the DG committee are already engaged in other business priorities, for example a program manager who is already tasked with multiple high-priority corporate development initiatives. This masks the true purpose of a DG team and makes it virtual.

The Problem

Firstly, the in-house teams do not have a well-defined approach towards setting up DG processes; those with an approach are unable to define success metrics, and those who manage to define the metrics do not know how to put them into action.

According to the Quality Axiom,

“What cannot be defined cannot be measured;

What cannot be measured cannot be improved, and

What cannot be improved will eventually deteriorate.”

This is very much true in DG also. In DG, it is not enough to define metrics. Organizations need to be able to control and monitor them, too. The problem lies in setting up the infrastructure and control mechanisms capable of continuous monitoring. To solve this, a specialist is usually called in.

The Catch

The recommendations provided by the specialist often look very promising and implementable. The catch lies in two gaps: between what the organization expects and what it gets, and between what is recommended and what can actually be implemented.

The first part, “Expectations of the organization,” relates to the assessment of the existing state of DG and getting recommendations to solve the DG problem. The second part, “What the organization gets,” is where the mismatch happens. Most of the maturity models used by specialists measure DG along the following five dimensions:

- Enterprise architecture

- Data lifecycle

- Data quality and controls

- Data security

- Oversight (funding, ownership, etc.)

The recommendations provided by these specialists traditionally pivot around these categories, but on a closer look, each of these categories has two components:

- Soft component (Level 1) – the easily implementable ones and

- Hard component (Level 2 & 3) – something which requires funding, effort and time to build

No matter how well the recommendations are categorized and sub categorized, these two components always exist. The soft components involving policy/process changes, re-organization, etc. are relatively easy to set up. However, the hard components that involve infrastructure, database, dashboards, etc. either get ignored or are partially implemented. It is this hard component that defines, “How many of the expert recommendations can be implemented?”

The 3-level approach (Figure 1) works well when the organization is serious, has the required funding and resources, and wants to set up DG in a short time frame.

The Reality Check

Implementing DG is not as simple as buying a product off the shelf and installing it. Even specialists sometimes turn down requests for implementing these hard components because of inadequate Enterprise Information Management (EIM) maturity. EIM architecture involves data sourcing, integration, storage and dissemination. Setting up DG processes will be difficult even if one of these components is weak in terms of governance.

There are several pitfalls one must look out for. First, DG initiatives can result in power shifts that are very difficult for senior management to accept, since they have hitherto enjoyed ungoverned process authority. This aversion to change and to giving up power paves the way for what can be termed a failure at the Level-1 stage. This is an organization culture issue that needs to be addressed to move further.

Second, the specialists normally quote a price that includes setting up foundation elements such as changing the ETL infrastructure, setting up metadata, fixing master data, etc. in order to set up DG. The business, instead of treating the cost of getting these foundation elements up and running as individual investments, sees this expenditure as part of the DG investment cost, and the initiative fails to get the required funding. When a multi-year roadmap is proposed and funding is sought in the name of implementing DG every time, sponsors will hardly be enthusiastic about the investment. Also, through the initial phases of a DG engagement, tangible results are difficult to establish and showcase, and hence the business might lose trust in the initiative. This can be termed a failure at the Level-2 stage.

Conclusion

To enable a mature DG setup, organizations need to step back and take a look at their existing architecture, get the foundational elements in place, and finally push hard to implement the necessary hard and soft DG components. The cost of implementing these foundational elements should not be considered a part of the DG initiative, because doing so will only undercut the priority of and interest in this critical undertaking.

Data governance has to be an evolving process in an organization. A big bang overnight implementation of DG might not always be advisable unless the foundational components are in place.

Posted: November 30th, 2010

Categories: Business Intelligence

Tags: data governance, dg, reality


Abstract

Are you faced with questions like,

• ‘Consulting – Is it an Expense or an Investment?’

• ‘Strategy – Is it just a jargon or a savior?’

• ‘Roadmap – Is it on track as planned? How do I do a health check?’

Corporates have, over a period of time, invested a lot of money in various tools and technologies to cater to the needs of various departments within their organization. This has now grown into a mammoth and has started to eat into their profits through licensing costs, maintenance costs, etc. Sometimes one has lost count of the number of applications in the landscape and has no clue what a particular application is for, who is using it and why it was needed in the first place. The impact of this became even more evident during the recession period, as IT budgets became slim and expectations from the top management to cut costs increased. The CIO now needs to strategize the IT spend to manage the cost within the budget. This paper tries to address where the CIO can start and how to leverage the services provided by various IT service providers.

Read on to find out how the recession-hit industries can leverage the consulting arm of the IT service providers to cut costs and at the same time get a futuristic view of their architecture / approach.

Recession-hit Industry:

During recession, quite a few Corporates had employed IT consultants to increase their productivity and cut costs. They achieved this by trying to get a neutral 3rd party view of where they were and what they needed to do next, to sustain themselves during recession. These corporates were primarily looking at consolidation, rationalization and/or optimization of their existing setup and even re-org in some cases.

Situation in the IT Services industry:

IT service providers realized the need for value added services in the market and forayed into the consulting services spectrum during the recession period. This was not set up just to help the clients come out of recession but also to sustain themselves as the IT budgets were growing thin.

In how many cases has one seen the consulting team go back and check what went right or wrong in their proposed recommendation? Even if everything went right, what is the probability that the users have bought into the solution? Is the problem really fixed, and are users reaping the benefits?

The real problem here is that consulting and delivery are two separate arms inside the same organization. There are pros & cons to this setup, and yes, they are different beasts. They need to have separate teams, heads, etc., but to achieve their full potential and for the survival of both these arms in the long run, they have to work hand-in-hand. Gone are the days when CIOs just wanted help in ‘doing it’; now they want to have ‘insights’ into what their competitors are doing. This has led to a very strong and rapid growth in the consulting arm within the IT services organization.

Let’s ask the question, “Can Consulting survive another recession, if it were to happen in the near term?” The answer would be that consulting might not survive as well as it did in the last recession, as most companies would by now have gone through, or be just about through, a fairly large consolidation, rationalization and/or optimization exercise. So in order to survive the next recession, consulting will now have to start looking at new avenues through which it can find inroads into its clients and keep the consulting arm from taking a hit.

The Solution:

Typically there is a strategy phase and then there is an implementation phase. The missing piece here is a continual monitoring/improvement phase. While the consulting arm takes care of the strategic piece and the delivery arm takes care of the implementation, “Extended consulting” will take care of this continual monitoring/improvement phase and also the client relationship.

To sustain the consulting industry in the long run, a strong link between the consulting and delivery arms needs to be created now. The result of this link would be a C-D-EC (Consulting – Delivery – Extended consulting/collaboration) model. The advantage here is twofold. One, it helps the consultants provide better recommendations and customize their existing frameworks. Two, after everything is set up, a minor tweak to the original recommendation based on user feedback can go a long way in terms of user buy-in, higher satisfaction levels, etc. One cannot deny the fact that there is a link between the two arms as of today, but one has to understand that this link is weak and not sufficient for survival in the long run.

Pure-play consulting firms, where the implementation is done by another vendor, and firms whose delivery (implementation) team belongs to a different vendor because the client’s organization has a policy of not deploying the same vendor for consulting and implementation, will have to customize their model to have an inroad back into the organization to Re-assess and Optimize. Now one might ask, “Is it good to have the same vendor for both consulting and delivery?” This is a debatable topic with pros and cons to weigh, but the C-D-EC model is fairly isolated from this problem. Who does it does not matter; whether it is done is what matters here.

If consulting and delivery are done by the same vendor, the initial homework can be done by giving the consulting arm access to the delivery team’s in-house repository. This will give the consultants sufficient time to study what was carried out at the client’s site based on their recommendations and to come up with tweaks and value adds before they go back to the client to amend their earlier recommendations and make them stronger and better. A by-product of this effort is that the consultants now have an opportunity to build better frameworks and processes around their existing frameworks. These can then be put forward to clients who are yet to undergo an optimization or consolidation exercise.

C-D-EC (Consulting – Delivery – Extended Consulting/Collaboration) model

The consulting models followed by various organizations across the globe, when mapped to the six-sigma methodology, would indicate that they address only a portion of the methodology. When the delivery team takes over and completes the implementation, we can say that 80% of the six-sigma methodology is addressed.

The C-D-EC model is based on the lines of six-sigma and addresses the complete DMAIC methodology end-to-end. The engagement starts with the consulting phase (Define-Measure-Analyze) to understand and define the client’s problem, walk through the client’s existing landscape, measure (quantify) the bottlenecks, and finally analyze the findings by base-lining each identified parameter that is in focus. The output of this consulting phase would be the typical recommendations, governance structure, best practices and implementation roadmap.

When the delivery team takes over and completes the implementation based on the proposed recommendations, we can say that the Improve phase of the six-sigma methodology is addressed.

What we have seen till now is what is being followed in the industry as of today. The Control phase of the six-sigma methodology is either left out completely or addressed in parts through a maintenance and support program. The problem with addressing the Control phase through maintenance and support is that it takes time to realize the complete benefit of the investment made. Consultants have no way to figure out how effective their recommendation was. For example, the support team can take anywhere between two to five months just to stabilize and familiarize themselves with the environment. This is because the development team normally does not end up as the support team also. Hence, on average it can take anywhere between four to five months for the support team to figure out possible areas for enhancement and roll them into production. Let’s have a look at how bringing the consulting team back in post-implementation can reduce this time lag of four to five months and also create some positive impact.

Extended Consulting:

The consulting team is brought back to study the gaps in implementation, understand how the users are interacting with the new setup, collect their feedback and propose an Optimize & Sustain strategy. This could be as simple as tweaking the processes/recommendations proposed initially or bringing in a completely new perspective like a collaboration that could take the entire setup to a completely new level. Under normal circumstances, this extended consulting would lead to the formulation of a continuous, repeatable and robust framework which the client could reuse on a day-to-day basis using its internal team. This fills the missing piece in the six-sigma methodology, the Control phase.

This phase can be included as part of the existing consulting models of various organizations at a small or no cost at all to the client depending on various factors like size of the implementation, relationship with the client, knowledge leveraged, etc.

Benefits of CDEC over CD

As explained earlier, the overall benefit of this model is the ability to re-assess and re-align the roadmap in large deals, especially roadmaps which span two years or more. It also gives the ability to fine-tune the proposed solution keeping the current market situation in mind.

On the other hand, it gives the consultants an inroad back into the client’s organization to look for new business opportunities and also sustain a long-term relationship.

Framework for Extended Consulting

Now that we have brought the consulting team back in, questions will arise: where should one start, how should one go about this extended consulting phase, can the same methodology that was followed initially be followed again, etc. One cannot follow the same methodology, as that would be overdoing the whole thing and would become a burden. Hence the ideal answer would be a downplayed version of the original consulting model any vendor follows.

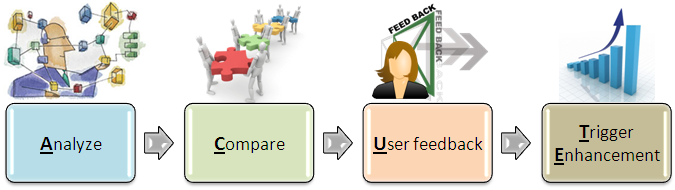

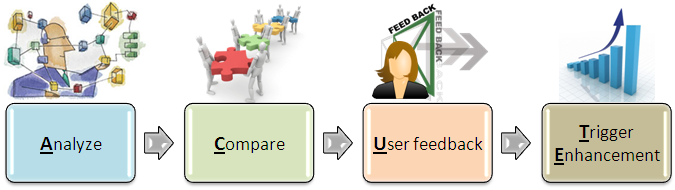

The primary factors this downplayed version needs to address are given by the ACUTE methodology below.

ACUTE (Analyze, Compare and gather User feedback to Trigger Enhancement)

Analyze

The post-implementation setup is studied at a high level to check the completeness and accuracy with which the implementation has been done. This phase is purely from a technical perspective.

Compare

The details collected in the Analyze phase are now compared against the recommendations given. The gaps, if any, are identified along with possible reasons for them and the impact of each gap. It would be an added advantage to interact with the development team(s) directly and get clarifications on these possible mismatches.
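At its simplest, the Compare phase is a diff between what was recommended and what was found on the ground. The sketch below assumes both are captured as simple name-to-value mappings; the keys, values and the `find_gaps` helper are purely illustrative, and the reasons and impact of each gap would still have to come from talking to the teams.

```python
from typing import Dict, List, Tuple


def find_gaps(recommended: Dict[str, str],
              implemented: Dict[str, str]) -> List[Tuple[str, str]]:
    """List recommendations that are missing or implemented differently."""
    gaps = []
    for item, expected in recommended.items():
        actual = implemented.get(item)
        if actual is None:
            gaps.append((item, "missing"))       # never implemented
        elif actual != expected:
            gaps.append((item, "deviates"))      # implemented, but not as proposed
    return gaps


if __name__ == "__main__":
    # Illustrative data only.
    recommended = {"reporting_tool": "single tool", "etl": "central", "metadata": "repository"}
    implemented = {"reporting_tool": "single tool", "etl": "per department"}
    for item, kind in find_gaps(recommended, implemented):
        print(item, kind)
```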

User Feedback

Feedback from key users is collected on parameters such as usability, productivity and user friendliness. Only the actual users of the implementation will be part of this phase.

Trigger Enhancement

This is the phase where the findings from the Compare phase and the feedback from users are studied, and alternate solutions and/or tweaks to the earlier proposed recommendations/processes are drafted wherever required. In some cases, a re-usable framework customized for the client’s needs can be generated and handed over for the client’s in-house team to leverage.

Thanks to KrishnaKumar who helped me with his valuable feedback to come out with this model.

Posted: November 15th, 2010

Categories: Consulting

Tags: cdec, consulting, framework
